The Anthropic Report, the LinkedIn Feed, and What AI Actually Does in Architecture Right Now

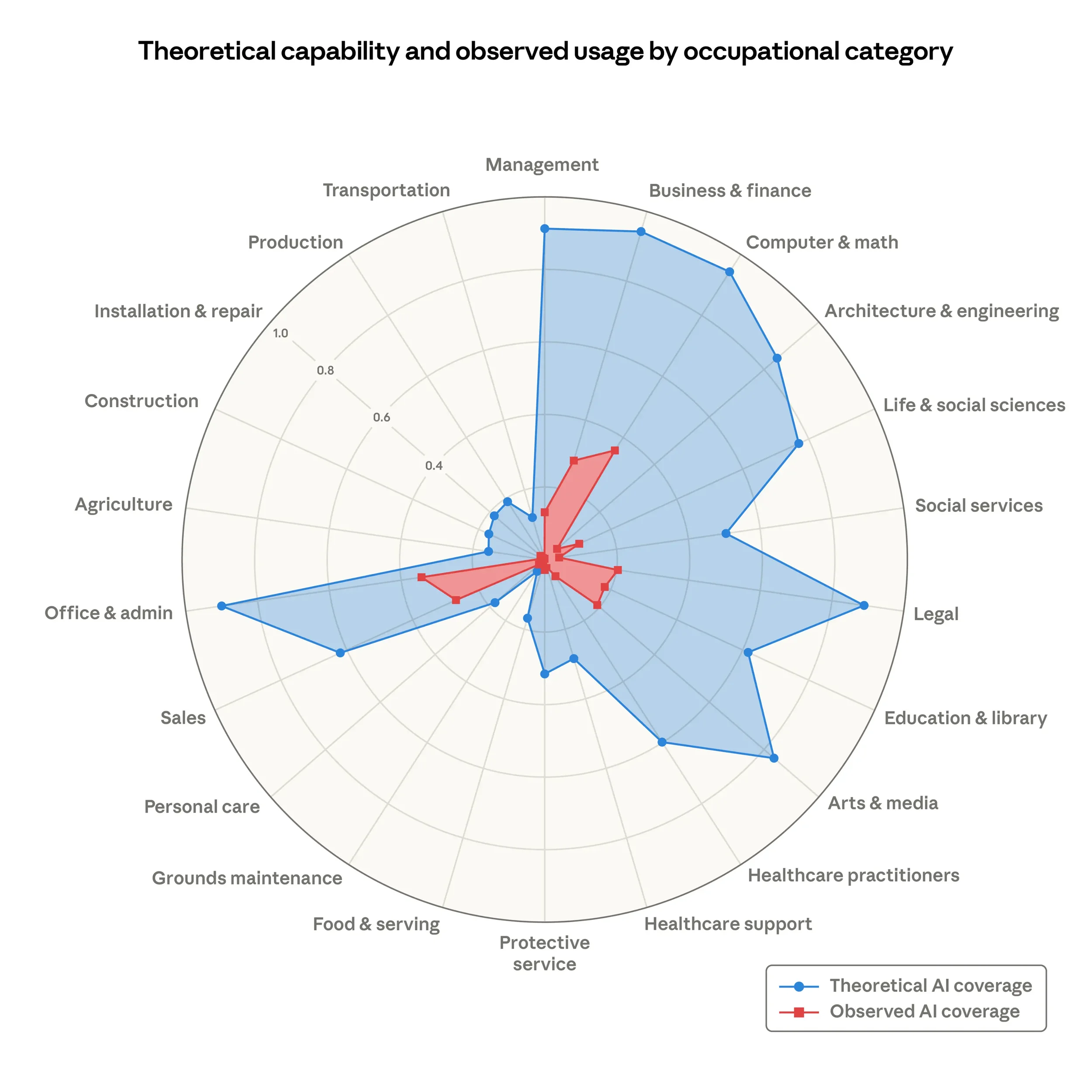

Figure 2: Theoretical capability and observed exposure by occupational category

Share of job tasks that LLMs could theoretically perform (blue area) and our own job coverage measure derived from usage data (red area).

Source: Labor market impacts of AI: A new measure and early evidence

There is an image that has been circulating on LinkedIn for a few weeks. You have probably seen it. It comes from Anthropic's Economic Index report, a study on AI's impact across professions, and it places architecture near the top of fields most exposed to automation. Every time it surfaces, it is posted by someone with "AI strategist" or "future of work" in their bio. The comments section fills quickly, mostly with agreement.

That consensus deserves some examination.

Before we take this at face value

Anthropic is a company that sells AI products. This does not make their research fraudulent, and it does not mean the report should be dismissed. What it does mean is that the report carries an institutional interest in a particular conclusion: one where AI is broadly capable, broadly relevant, and worth adopting. That is a reasonable prior to hold when reading anything they publish. It matters more once you look at how the report is structured, which is mainly as a document of conclusions. Yes, the full methodology is included, but you are given the starting and end positions, not the steps in between. This is common in industry research, but it also means that the image being shared endlessly is a further simplification of a summary document, based on a process you do not have access to, and published by a company with a stake in how you interpret it.

None of this makes it wrong. It does mean it warrants scrutiny rather than circulation.

The second issue is the information loop itself. A striking image, backed by an institutional name, gets picked up and shared by people whose professional identity is tied to AI adoption. Each share adds perceived weight. By the time it has crossed your feed three times, it reads like an established fact. This is not a conspiracy; it is just how attention works online. But it is worth naming. We have seen this pattern again and again, particularly in elections and politics in general. A piece of information is framed in a striking manner, pushed by a small number of publications with a good image, and then picked up verbatim by countless others.

What the report actually says about architecture

Not much, beyond the classification itself. Architecture appears in a high-exposure category. No mechanism is explained. No distinction is made between the phases of architectural work, the scale of practice, or the difference between a feasibility study and a construction document set. The image does the work; the report does not do much more.

When I first saw the image, I wanted to read how this exposure was established for architecture, but the report offers nothing specific, and the way its conclusions are written gives little to go on. The methodology does not help either, as it is general rather than particular to our field.

What AI can currently do in architectural practice

This is written from the position of a studio that has been implementing AI tools in live practice for the past one to two years — not experimentally, but operationally, with real projects and real constraints. The following is what that experience has produced.

The tools that exist today fall into three categories: visualisation, feasibility, and support. All three are concentrated in the schematic design phase, which is the earliest and, in terms of total project effort, the smallest part of the architectural process.

Visualisation. There are two types of visualisation tools in current use: real-time renderers connected to BIM software, and high-end professional tools used by dedicated visualisation studios. AI image generation is a credible replacement for the first category. The outputs are generally competent: serviceable for client communication, fast to produce, and accessible without specialist skills. The limitations are just as real: granular control over light, material, and composition remains inferior to traditional software, and consistency across a full image set is unreliable because of hallucination between generations. For a promotional image campaign, you are not there yet. For a schematic design presentation, you probably are.

Feasibility. Tools like Forma and Spaceio operate at the massing and site analysis level. They are useful for large-scale feasibility work — solar exposure, density calculations, gross floor area studies. They serve a specific part of the market: larger firms running early-stage analysis on significant sites. For most residential or small commercial practices, they are either inaccessible by cost or irrelevant by scope.

Support. A range of tools assist with discrete tasks — generating 3D assets, drafting text, and basic specification research. These are useful in the way that any competent assistant is useful: they reduce time on low-value tasks. They do not change the nature of the work.

That is the current landscape. Everything past schematic design — design development, construction documentation, technical coordination, detailing — remains a manual process.

The experiments that did not work

Three attempts from inside the studio are worth documenting because they illustrate where the ceiling actually is.

The first was a construction document review tool. The goal was straightforward: upload a PDF drawing set, provide project context, and have an AI agent flag clashes, missing labels, dimensioning errors, and graphical inconsistencies. The agent was built using Claude via an n8n workflow. After two weeks of testing, it was abandoned. The core problem is that large language models are built on language. They do not understand a drawing. They can read text within a drawing, but spatial relationships, graphical conventions, and the logic of a construction set are not legible to them in any reliable way. The concept was sound. The technology is not there.

The second was a direct MCP connection between Claude and Archicad, intended to function as a design assistant operating inside the modelling environment. The connection was established, and Claude could execute Python. The problems began immediately: misinterpreted requests, an unstable connection, and outputs that required more correction than they saved. There is a theoretical case for this kind of tool at scale, with sufficient reliability. But the threshold for useful reliability in a live project environment is high: you need to trust the tool enough to reduce checking, not increase it. That threshold was not reached.

The third was a Claude-to-Archicad pipeline via Grasshopper. This one was closed quickly. The pipeline would have been structurally fragile outside of rigid, repeatable workflows, which architectural design is not. More fundamentally, the model does not understand a PLN file. The tool itself advised against the approach.

Why the gap exists

The limitation running through all of this is not processing power or training data volume. It is that large language models are, structurally, language tools. Architectural work — past the point of early concept — is a spatial, technical, and relational discipline. It involves understanding how a set of drawings communicates a building to a contractor, how a detail fails under certain conditions, and how a building performs across its systems. These are not language problems.

The tools being built for architecture are largely being built by people who understand AI and have learned some architecture. The tools that would actually move the needle would need to be built by people who understand architecture and have learned some AI. That distinction matters more than it might appear.

A possible future

The most reliable way to anticipate where a technology goes is to look at where it has been — and at the closest precedent we have in this field.

BIM was genuinely transformative. It reduced drafting time, introduced spatial logic into the production process, and changed how architects think about documentation. What it did not do was reduce the total hours worked. The time freed by BIM adoption was absorbed by added complexity — more detailed coordination, more thorough technical development, and higher client expectations. You stopped spending eight hours on drawings and started spending six hours on drawings and two hours on harder problems. The net remained roughly the same.

If AI follows a similar trajectory — and current evidence suggests it might — the gains will be real but bounded. Visualisation becomes faster. Certain early-stage analyses become more accessible. Some administrative overhead decreases. But the total hours of architectural thinking required to deliver a building well are unlikely to fall significantly, because the complexity is not incidental to the work. It is the work.

There is also the question of what AI is actually getting better at. On the technical side (understanding spatial relationships, reading construction logic, interpreting a drawing as a drawing), improvement has been slow. The outputs look more convincing. The underlying comprehension has not moved at the same pace. That gap matters when the question is not "can AI produce an image that looks like architecture" but "can AI understand what a building needs to be."

The most defensible position, given what the technology currently does and the rate at which it is improving in the areas that matter to practice, is this: AI will be a capable and increasingly useful tool operating alongside the architect, within existing software environments and workflows. It is unlikely, on any near-term horizon, to restructure the core of how architectural work is done.

Where this leaves the report

The Anthropic Economic Index is not wrong that AI will affect architecture. It already has, in the areas described above. What the report does not — and perhaps cannot — do is tell you which parts of architecture, on what timeline, and with what actual impact on how practices operate and how architects work.

The image being shared on LinkedIn tells you that architecture is exposed. It does not tell you that a residential architect's core work is currently automatable, because it is not. It does not tell you that the tools exist to replace construction documentation, technical design, or project coordination, because they do not.

Healthy scepticism about vendor-published research is not anti-technology. It is just good reading practice. The more interesting question — what would AI need to be able to do to actually change how architectural practice works — is one the field would benefit from asking out loud, with specificity, rather than sharing an image and calling it a conversation.